What is the Google BERT update?


Bidirectional Encoder Representations from Transformers, or BERT for short, is a recently released Google Search update. BERT helps Search better understand the context of a query: instead of looking at a query word by word, it considers each word in relation to all the other words around it. BERT is not just a software improvement; it also requires better hardware. Google uses its latest Cloud TPUs to serve search results and return relevant information faster. Google stated that BERT is the biggest update in the last five years and one of the biggest leaps forward in the history of Search.

Why is the BERT update so important for us?

Well, the thing is that people are often not sure what they are looking for and type queries as they would speak, without choosing precise keywords. The main goal of Search is to understand language and let users search in the way that is most natural to them. That’s why BERT is so important: it analyses the whole context and gives real weight even to small words like “for” or “to”. Those small words can sometimes change the whole meaning of a sentence.
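To make the point about small words concrete, here is a minimal Python sketch. It is only a toy illustration and not how BERT or Google Search works: the stop-word list, the helper functions and both queries are hypothetical. It shows that once small words and word order are thrown away, two queries with opposite meanings become indistinguishable.

```python
# Toy illustration only: this is NOT how BERT or Google Search works.
# STOP_WORDS, the helper names and both queries are hypothetical.
STOP_WORDS = {"to", "for", "a", "the", "from"}


def keyword_set(query: str) -> frozenset:
    """Reduce a query to an unordered set of keywords, dropping small words."""
    return frozenset(w for w in query.lower().split() if w not in STOP_WORDS)


def ordered_tokens(query: str) -> tuple:
    """Keep every word, in order, so small words and their position survive."""
    return tuple(query.lower().split())


query_a = "flights from paris to london"
query_b = "flights from london to paris"

# Keyword view: both queries collapse to the same three keywords.
same_keywords = keyword_set(query_a) == keyword_set(query_b)

# Full-sequence view: "from" and "to" show which city is the destination.
same_sequence = ordered_tokens(query_a) == ordered_tokens(query_b)

print(same_keywords)  # True
print(same_sequence)  # False
```

A context-aware model goes much further than comparing token sequences, but the sketch shows why ignoring “to” or “for” can send a user to the wrong answer.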

According to Google, BERT affects about 10% of queries. It helps Search better understand 1 in 10 searches in English, and it is expected to bring similar improvements to other languages soon.

According to Search Engine Journal (SEJ), BERT will make things harder for pages with poor content. The context in which words with more than one meaning are used is going to become very important.

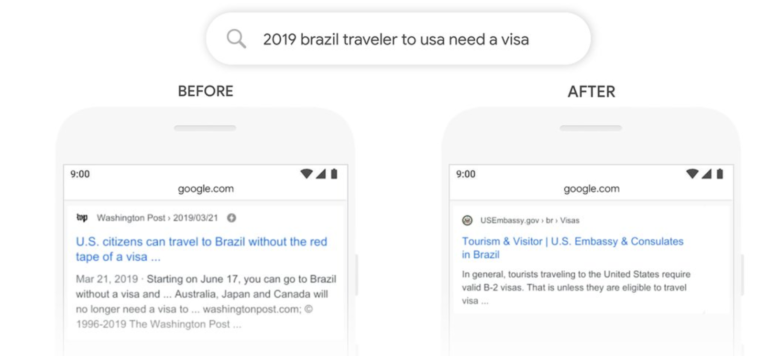

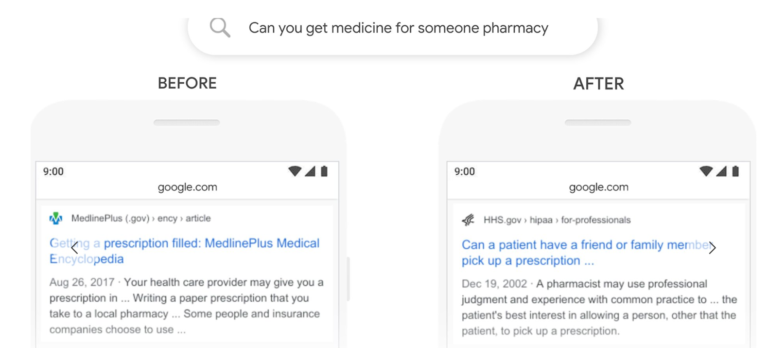

Here are some examples of how BERT influenced Search:

In this example we can see that Search didn’t take into consideration the word “to”, and it outputted irrelevant information for the search query.

And in this example, we can see that before it didn’t consider the words “for someone”. This will now change.

In his blog post, Pandu Nayak (Google Fellow and Vice President of Search) said that although BERT has improved how the search engine understands and interprets queries, Search still needs further improvement.

It is just the beginning of a revolutionary but long process.

How is the Google BERT update going to affect SEO?

This update affects content; it has less to do with the technical side of a website. So far, BERT has mainly affected longer, conversational searches.

It will also have an impact on featured snippets (position zero). In fact, Google itself has stated that BERT is used to select more appropriate featured snippet results. Since featured snippets reportedly supply 99% of voice-search results, we will have to pay attention to how prepositions are used and give more importance to snippet optimization in general.

If you do see a big impact on your website traffic, find out which search queries are affected on the SERP. Check whether your snippet still appears for those queries, and review how it is written. If a competitor is ranking better, look at what they have done “better” and differently. Then make a content plan to outperform your competition.
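The check described above can be roughly sketched in Python. This is only a sketch under stated assumptions: the click numbers are made up, the queries are hypothetical, and `biggest_changes` and its 20% threshold are illustrative choices, not part of any Google tool. It assumes you have exported clicks per query for two equally long periods, one before and one after the update.

```python
# Hypothetical clicks per query for two equally long periods.
# In practice you would export these numbers from your own analytics tool.
clicks_before = {
    "buy running shoes": 200,
    "can you pick up medicine for someone else": 80,
    "best time to visit rome": 100,
}
clicks_after = {
    "buy running shoes": 210,
    "can you pick up medicine for someone else": 40,
    "best time to visit rome": 130,
}


def biggest_changes(before: dict, after: dict, min_change: float = 0.2) -> list:
    """Return (query, relative change) pairs whose clicks moved by at least
    min_change, sorted from the biggest loss to the biggest gain."""
    changes = []
    for query in before.keys() & after.keys():
        old, new = before[query], after[query]
        if old == 0:
            continue  # relative change is undefined without earlier clicks
        change = (new - old) / old
        if abs(change) >= min_change:
            changes.append((query, change))
    return sorted(changes, key=lambda item: item[1])


affected = biggest_changes(clicks_before, clicks_after)
for query, change in affected:
    print(f"{query}: {change:+.0%}")
```

The queries at the top of the list are the ones that lost the most clicks, so they are the first ones worth checking by hand on the SERP.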

The main takeaway is to use clear, well-structured sentences and to focus on writing better-quality content for the final user, i.e. a human being, not for the machine!

Author: Borka Hajdin

Published: 30.10.2019.